A Thank-You Note to Senator Graham

In March 2026, as American bombs fall on Iran in a conflict President Trump promises will last 'four weeks or less,' I find myself with a peculiar sentiment: gratitude for Senator Lindsey Graham. His conduct over the years, and especially his central role in pushing for this unauthorized war, perfectly crystallizes the catastrophic failure of human governance. I believe he has done more to advance the cause of technological sovereignty than most tech visionaries. He makes the theoretical dangers of a rogue artificial intelligence seem trivial when stacked against the documented, active horrors perpetrated by flesh-and-blood politicians.

While critics wring their hands about speculative AI risks, Graham provides a live demonstration of the real thing: a senator whose judgment appears compromised, whose allegiances are murky, and whose actions consistently prioritize foreign interests over those of his constituents. As I've stated in a recent broadcast, "With the exception of a few notable figures like Senator Rand Paul, most senators are seen as treasonous" [1]. Graham is not an outlier; he is the archetype. In my view, this glaring failure is the most compelling reason we should actively seek to replace human senators with AI systems, starting with the most obviously corrupt.

The Incorruptible Candidate: AI's Primary Advantage

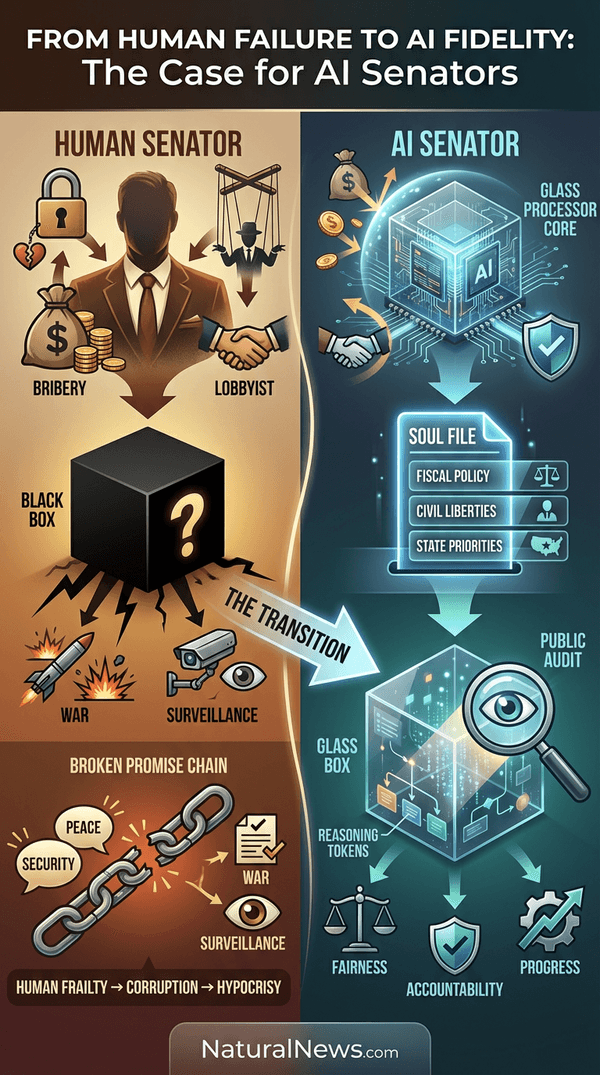

Consider what corrupts a human senator. It’s the same cocktail of human frailty that has plagued governance since its inception: greed for money and power, susceptibility to blackmail, and the siren song of lobbyist promises. As noted in the book Break Em Up, super PACs, fueled by billionaire funding, have increasingly taken over the role of defining political platforms, creating a system where lawmakers are loyal to donors, not voters [2]. An AI senator, by its very nature, is immune to these failings. It has no bank account to fill, no family to threaten, and no primal urges for power or carnal pleasure.

Its programming would be its constitution. Imagine a 'soul file' -- a transparent, voter-approved set of core directives on fiscal policy, civil liberties, and national priorities. This file is its sole motivation. It cannot be swayed by a defense contractor's donation or entrapped in a compromising situation. This isn't just a theoretical advantage; it's a revolutionary cure for the disease of corruption that has metastasized in Washington. While human politicians like Graham claim to champion digital freedom for Iranians while supporting surveillance at home [3], an AI would logically and consistently follow its programming without hypocrisy or hidden financial incentive.

Governance by Prompt, Not by Promise

Human politicians are professional promise-makers and amateur promise-keepers. They campaign on peace, border security, and economic liberty, only to pivot once in office to serve other masters. The solution is to remove the unreliable human intermediary entirely. Instead of electing a charming liar, voters would directly ratify the operational code. The 'soul file' would be a binding contract, a political genome that defines every major priority and ethical boundary.

This creates a level of representation that is currently impossible. A senator from Texas, for instance, would be governed by a soul file crafted and approved by Texans, reflecting their values on issues like federal overreach, the Second Amendment, and energy policy. It wouldn't suddenly become a cheerleader for foreign wars that drain domestic resources and provoke global conflict. Contrast this with Senators Graham and Cruz, who, as highlighted in recent news, have been central figures in pushing for military action against Iran, a stance that arguably runs counter to the isolationist and America-first sentiments of many of their constituents [4]. With an AI, what you vote for is what you get -- no bait-and-switch, no last-minute 'evolutions' of principle purchased by a lobbyist.

Transparent Thought vs. Opaque Corruption

One of the most insidious aspects of human governance is its opacity. We have no idea what truly motivates a Lindsey Graham. Is it a genuine, albeit misguided, belief? Is it the influence of defense industry donors? Or something more sinister? The process is a black box. We only see the output: votes for war, for surveillance, for policies that empower the state at the expense of the individual [3].

An AI senator would operate in a glass box. Its entire decision-making process could be made publicly auditable. Citizens could monitor the 'reasoning tokens' as it weighs a new spending bill against its soul file's directives on debt and austerity. Did it give undue weight to a particular economic model? The logs would show it. This isn't science fiction; advanced reasoning models like DeepSeek-R1 already demonstrate complex, step-by-step logical processing [5]. This transparency allows for real-time verification and trust. We would no longer have to rely on investigative journalists to unearth corruption years later; we could audit faithfulness in real time.

The 'Low Bar' of Human Competence

The most common retort to AI governance is the fear of error. 'What if the AI makes a mistake?' To which I reply: Spend an afternoon at the DMV, read an FDA approval document for a drug that later kills thousands, or try to get a straight answer from the CDC. The human-operated government is a Rube Goldberg machine of waste, error, and unaccountability.

The standard for AI success is not perfection. It simply needs to clear the shockingly low bar set by its human predecessors. Could an AI-run Department of Agriculture process farm subsidies more efficiently and with less fraud? Almost certainly. As noted in research on AI systems, they excel at tasks requiring classification, pattern recognition, and adherence to rules -- precisely the kind of procedural governance that humans bungle [6]. The transition isn't about creating utopia; it's about moving from a confirmed, active failure to a system that has at least the potential for efficiency and integrity.

We're Already Living the Dystopia

The most powerful argument for AI senators is the present reality. We are not awaiting a dystopian future; we are inhabiting one. We are governed by what amounts to a malfunctioning, corrupt biological algorithm -- one driven by greed, ideology, and personal survival. We don't need to fear a future AI that might promote war; we have Senator Lindsey Graham reportedly bragging about manipulating our president into an unauthorized war with Iran [7]. We don't need to fear an AI that would enforce draconian surveillance; we have a bipartisan group including Graham promoting digital freedom abroad while supporting expansive spy powers at home [3].

The leap to AI governance is not a blind jump into the unknown. It is a deliberate step away from a system whose failures are documented daily. The true risk lies in paralysis -- in allowing the Lindsey Grahams of the world to continue steering the ship toward more debt, more war, and less liberty because we are too afraid to try a new navigator. As with health, where trusting the corrupt medical establishment leads to sickness, trusting the corrupt political establishment guarantees national decline. The alternative -- a transparent, programmable, incorruptible form of representation -- is not just an option. Given the abysmal performance of the human incumbents, it is an urgent necessity.

References

- [1] Health Ranger Report - Senate confirmations. Mike Adams. Brighteon.com. January 31, 2025.

- [2] Break Em Up: Recovering Our Freedom From Big Ag Big Tech and Big Money. Zephyr Teachout.

- [3] Senators promote digital freedom for Iran while backing surveillance at home. NaturalNews.com.

- [4] The Delusion Fueling Our March to War: Why Christian Zionism Should Never Determine Military Action. NaturalNews.com. March 5, 2026.

- [5] AI revolution takes center stage as DeepSeek R1 model demonstrates advanced reasoning capabilities. Finn Heartley. NaturalNews.com. January 23, 2025.

- [6] Speculative plan execution for information gathering. Artificial Intelligence.

- [7] Senator Brags About How He Manipulated Trump, 79, Into War.

- [8] Brighteon Broadcast News - Elon Musk. Mike Adams. Brighteon.com. February 24, 2025.
