On August 1, 2017, the YouTube blog posted an update on the company’s Orwellian censorship policies. Under the guise of updating users on its “commitment to fight terror content online,” YouTube will be implementing full Nazi fascist tactics to hide videos it describes as “controversial but do not violate our policies,” by using “cutting-edge machine learning technology designed to help us identify and remove violent extremism and terrorism-related content in a scalable way.”

(Article by Susan Duclos republished from AllNewsPipeline.com)

Tougher standards: We’ll soon be applying tougher treatment to videos that aren’t illegal but have been flagged by users as potential violations of our policies on hate speech and violent extremism. If we find that these videos don’t violate our policies but contain controversial religious or supremacist content, they will be placed in a limited state. The videos will remain on YouTube behind an interstitial, won’t be recommended, won’t be monetized, and won’t have key features including comments, suggested videos, and likes. We’ll begin to roll this new treatment out to videos on desktop versions of YouTube in the coming weeks, and will bring it to mobile experiences soon thereafter. These new approaches entail significant new internal tools and processes, and will take time to fully implement.

In other words, ladies and gentlemen, if political or religious commentary is considered non-compliant with the ideology of the groups they are using to “train” their bots, which they described as “expert NGOs and institutions through our Trusted Flagger program, including the Anti-Defamation League, the No Hate Speech Movement, and the Institute for Strategic Dialogue,” those videos will be hidden. There will be no ability to “share” them using the icons meant for that purpose, the creators won’t be able to monetize them, and people will not be able to “like” them or comment on them.

If that isn’t going full fascist Nazi on Independent media, what would be?

They list three specific ways they claim they have seen “positive progress.”

The first is listed as “Speed and efficiency,” where it states the following: “Our machine learning systems are faster and more effective than ever before. Over 75 percent of the videos we’ve removed for violent extremism over the past month were taken down before receiving a single human flag.” The second is listed as “Accuracy,” where they claim “The accuracy of our systems has improved dramatically due to our machine learning technology. While these tools aren’t perfect, and aren’t right for every setting, in many cases our systems have proven more accurate than humans at flagging videos that need to be removed.”

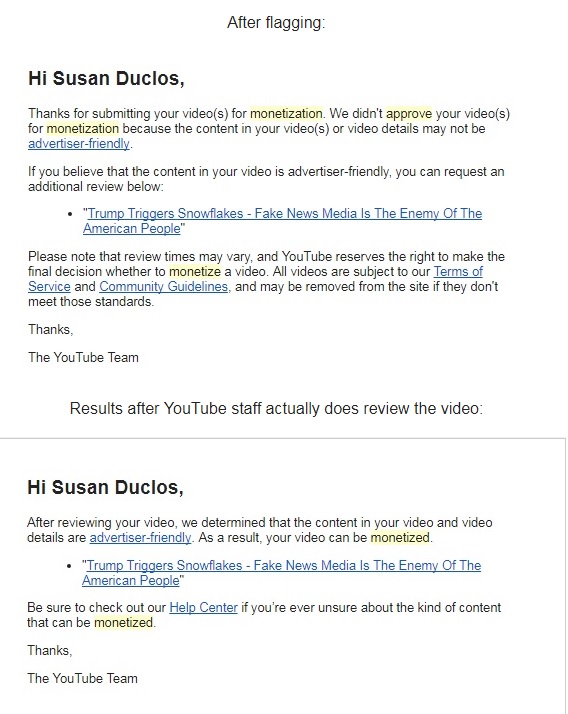

We will stop here for a minute to address that “accuracy” claim. In a previous article we showed, with screenshots, just how “inaccurate” their little AI flagging bots or human flaggers are: after appeal, video after video on ANP’s channel was remonetized once a human reviewed it.

One example shown below:

So much for their flagging “accuracy.” We have saved all the YouTube notification emails in which a video was flagged despite containing no graphic, violent or sexual content and no swearing, nothing but political commentary, only to have that decision overturned when a human being finally reviewed it.

IT GETS WORSE

YouTube has also decided that if a user searches for terms the company has flagged as “sensitive keywords,” the search results will now be redirected “towards a playlist of curated YouTube videos that directly confront and debunk violent extremist messages.”

This YouTube update has not gone over well with users or creators, as seen in some of the comments below their blog post on this topic. One commenter said: “These are the actions of people who fundamentally do not believe in human freedom. They are not content with allowing people to choose for themselves, they have to impose a narrative on them. Or at least try. I suspect if I wanted to I could find lots of videos that were very offense to me, but I don’t go looking for them. Everyone has that same ability. I don’t look for Pro-ISIS, Pro-Islam content. I don’t go looking for videos from Communists and Marxists. It doesn’t interest me, but they should be allowed to make them. But the same is not offered to the other side.”

Another user gets right to the heart of the issue when they state “In other words, if you are not a social justice warrior, your videos will be restricted. Censorship = making YouTube safer for all.”

More reactions include “Whats worse is if you search for content you want but Google and the “Creators for Change” think your opinions are shit you’ll get a wave of propaganda sponsored by governments in league with Google, corporate partners, advocacy groups, and Youtube’s chosen bigots!”

Heddy McCrab: This is the particularly egregious part, right here. Not only are users no longer capable of exercising their brains to choose what content they want to share and comment on, but if you happen to harbor an idea that Master Google Upon High deems ‘problematic’, they’ll spam you with re-education center propaganda.

Then they’ll put you on a list with all the other ‘problematic’ people and sell it off to the media and would-be fascists they’ve recruited to serve as ‘experts’ in detecting offensive content.

That’s assuming, of course, that they don’t just deactivate your account and e-mail address like they just got caught doing earlier.

Gwen Patton: Please don’t go down this road. It’s disturbing and will not end well. It’s going to be abused and misused by people who want to forward their own agendas. All you’re doing is creating a PC, extreme-left, propagandizing echo chamber.

John Smith: that sh*t is called censorship. that is suppression of opposition and destroys freedom of speech. but of course google only cares about these things when it favors them. calling the right fascist while using the fascists methods themselves. truely disgusting

Readers can scroll down at the YouTube post to see the rest of the comments, each and every one against Google/YouTube’s fascist tactics, but fair warning, some are very colorful with their language.

We would recommend readers politely weigh in for themselves, letting YouTube know what you think of their censorship practices.

WHAT YOUTUBE IS ALLOWING IS SICKENING

Two articles just sent to me by Stefan show that while YouTube is busy censoring anything they consider “extremists,” such as conservative political commentary and criticisms against the MSM, they are allowing channels like “Tween,” which caters to pedophiles. One such channel was subscribed to by none other than Imran Awan, the man just arrested attempting to leave the country, who is under criminal investigation for wrongdoing while he worked in IT for dozens of Democratic members of the House.

So, pervs, pedophiles and channels that indoctrinate and brainwash people into the liberal social justice warrior mindset, are A-OK, but political commentary that does not fit with Google/YouTube’s ideology is now considered “extremist content,” to be hidden from view.

BOTTOM LINE

YouTube, which is owned by Google, is attempting to stifle any type of independent thinking and any conservative opinion and commentary by labeling it “extremist,” while conflating it with legitimate terrorist content.

This is how they intend to destroy Independent Media that will not push the official propaganda.

YouTube creators are also speaking out against this fascism on the part of Google/YouTube. In less than a full day, dozens of creators are already hammering YouTube. Just a few are shown below, but a search on YouTube for the last 24 hours turns up many, many more.

Pretty soon that “search result” may not “show” those criticisms because they do not conform to Google-YouTube’s preferred narrative.

Below: Styxenhammer – 148,000 subscribers

Below: Paul Joseph Watson – 1 million subscribers

Below: Mark Dice – 1 million subscribers

Below: Richard Lewis – 93,000 subscribers

Read more at: AllNewsPipeline.com

This article may contain statements that reflect the opinion of the author